The Problem

I start as an assistant professor of political science at the University of South Carolina in the fall. Because my future employer has signed a $1.5 million contract with OpenAI to provide students free access to ChatGPT, I’ve been thinking, perhaps too much, about how to design my AI course policy. Most of the policies I’ve encountered fall into one of two camps: ban AI entirely, or ignore it and hope for the best. Neither approach seems sustainable. University partnerships with AI companies (OpenAI, Anthropic, Microsoft, Google, etc.) have exploded in the U.S. and show no signs of slowing down. Outright bans have grown disconnected from the infrastructure students are now handed, and pretending the tools don’t reshape how students engage with coursework is naive at best, harmful at worst.

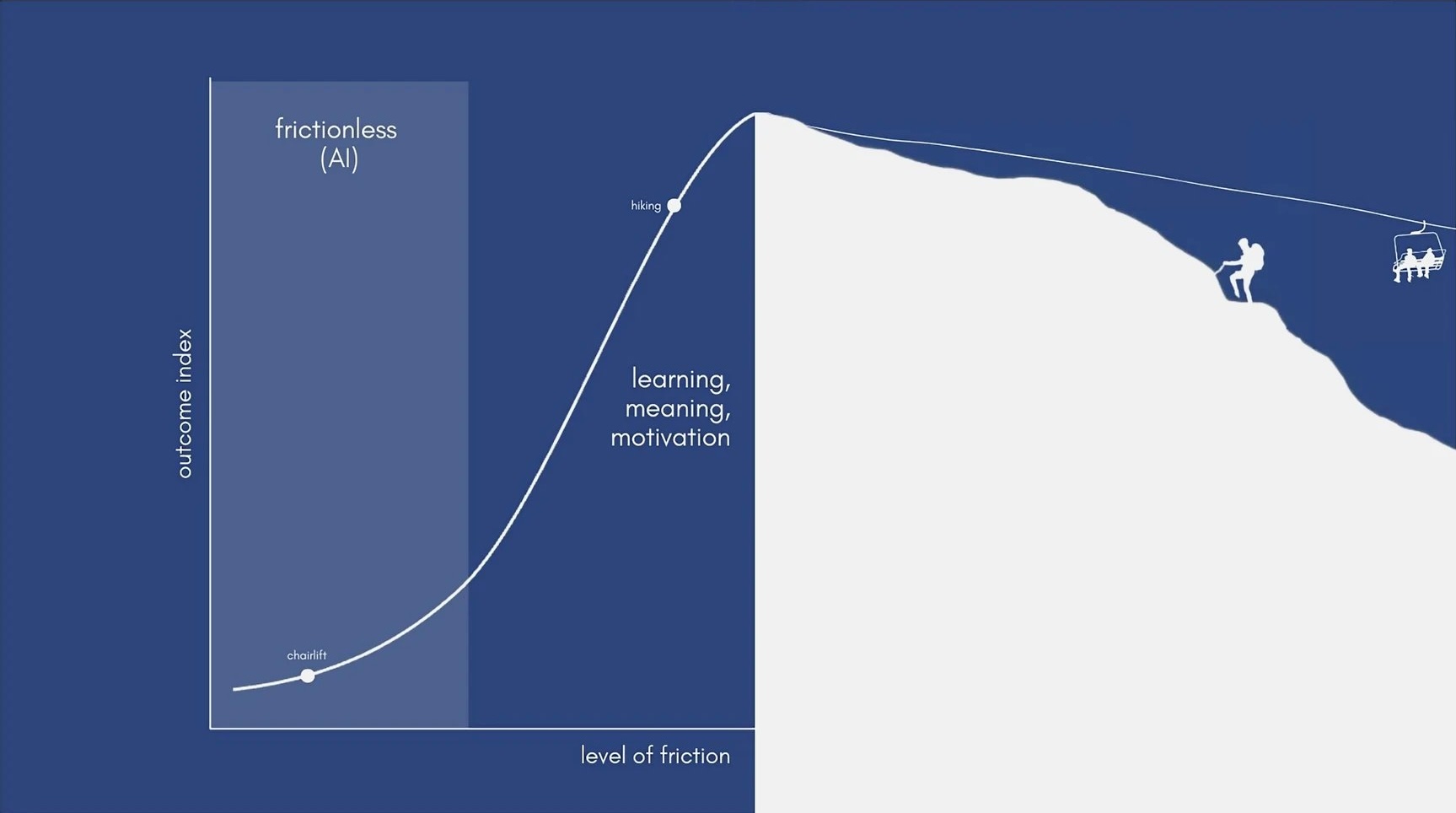

A consensus is emerging about what makes the use of generative AI in the classroom problematic, and it isn’t cheating in the narrow sense, though that is certainly still a problem. The deeper issue is that these tools offload the thinking process itself, specifically the metacognitive work of wrestling with your own reasoning, sitting with uncertainty, and updating your priors. That friction is where genuine understanding develops. When a large language model (LLM) drafts your argument for you, the product might look fine (to students, less so to professors…), but the learning never happens. As one study observes, students who use these tools to generate solutions to exercises are exposed to a wider range of topics at the cost of meaningful understanding, whereas those who use the tools to provide explanations grasp the material at a deeper level while covering topics at a more measured pace. Prioritizing outcome over process, quantity over quality, and ease over friction is precisely what makes AI’s greatest strength also its greatest liability.

If the core problem is the loss of beneficial friction (and the inability to distinguish it from excessive friction), then the solution should be to reintroduce desirable difficulties into how students interact with the models themselves. Exerting effort, of course, is often what makes life meaningful: it is a way to rebel against the apparently meaningless nature of the world, as Camus once wrote.

My Approach

In my World Constitutions course this fall, students will be allowed to use AI, but only after completing a structured template that forces them to think before they prompt. Throughout the semester, students will write weekly discussion posts using the readings to analyze how their country’s constitution (chosen at the start of the semester) addresses the topics under study that week. They will write all their posts in a shared Word document, which has version history. I will encourage students to write these posts without AI assistance, but if they do use AI, the template is required (see below).

Instructions for AI: I am an undergraduate student working on my own argument. Your job is to be a critical thinking partner. Identify logical gaps or tensions in my reasoning, suggest alternative interpretations I may not have considered, and raise objections to my argument. Do not give me “the answer” or draft my response.

My question: What am I trying to figure out?

My current position: What do I think the answer is, even if tentative? (“I’m not sure” is not acceptable. Take a stance.)

My reasoning: Why do I think this? What evidence or logic supports my view? (2-3 sentences minimum.)

What I want from you: (Choose one) Stress-test my reasoning and identify gaps / Help me see alternatives I haven’t considered / Check whether I’m understanding a concept correctly / Other:

The AI’s instructions are explicit. It is to act as a critical thinking partner. It should not give the answer. It should not draft a response. There’s a diagnostic element built in as well. If a student can’t fill out the template, that tells them something useful. It means they need to do more reading or come to my office hours—not that they need a better prompt.

Accountability Without Surveillance

My goal is not to police students’ use of generative AI tools, but to ensure they develop the thinking skills that make such tools useful rather than harmful. My political education—and the readings that have informed my pedagogy—has made me averse to surveillance. Moreover, on a practical level, time wasted on monitoring students’ use of AI (a losing battle) could otherwise be spent fostering trust and goodwill with them.

I’m pairing the template with a brief oral check-in during the semester, where each student walks me through the reasoning behind their final paper. For this research project, I ask them to develop an original argument about their country’s constitutional system, building on the work they have done for their weekly discussion posts. The check-in is pass/fail. I will ask questions like “How did you arrive at this argument?” or “What alternatives did you consider?” Students just need to be able to walk me through their most recent thinking. No interrogation, no games: just a lightweight mechanism designed to make open and honest engagement the path of least resistance.

A Work in Progress

If these tools are here to stay, I think we have a pedagogical obligation to be transparent with students about something uncomfortable: we, as instructors, are still figuring out how to use them well. Pretending otherwise doesn’t help anyone. Part of what I want to model in this course is that thoughtful experimentation is a legitimate response to a genuinely new problem.

I’m not claiming this is the answer. I’m trying it for the first time this fall. Ask me in December how it went.